OCTA-based AMD Stage Grading Enhancement via Class-Conditioned Style Transfer

Published in the International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), 2024

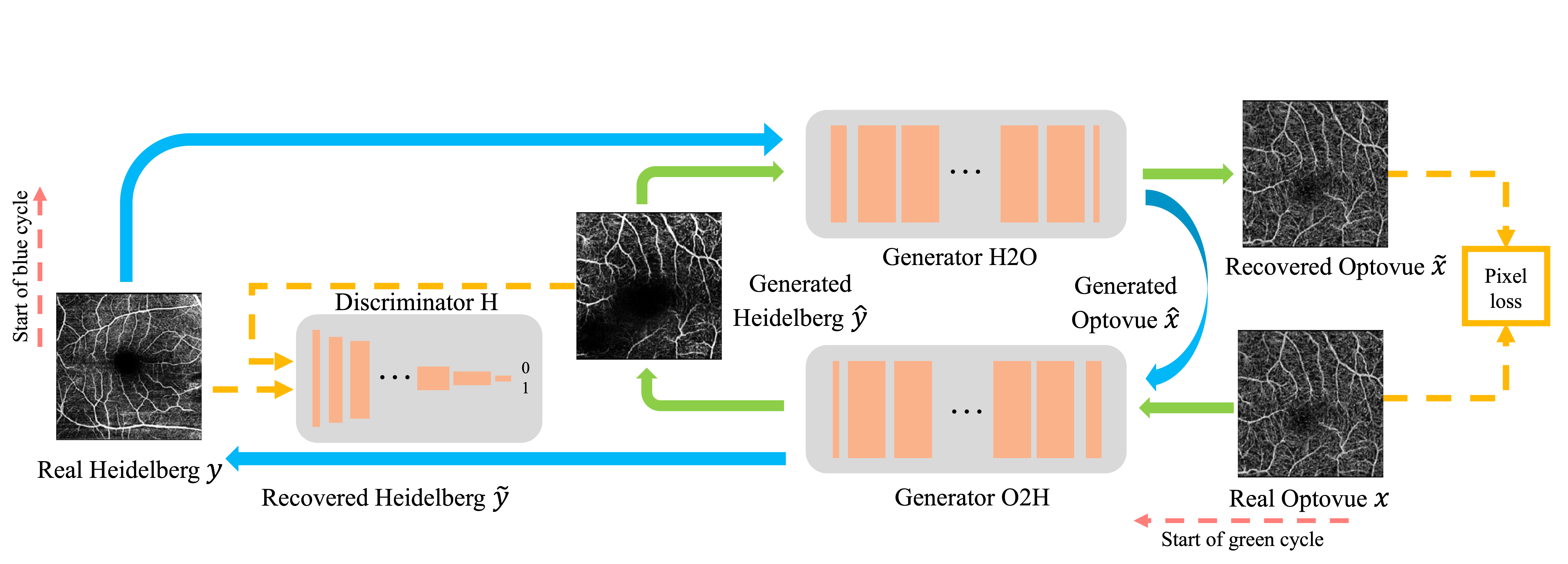

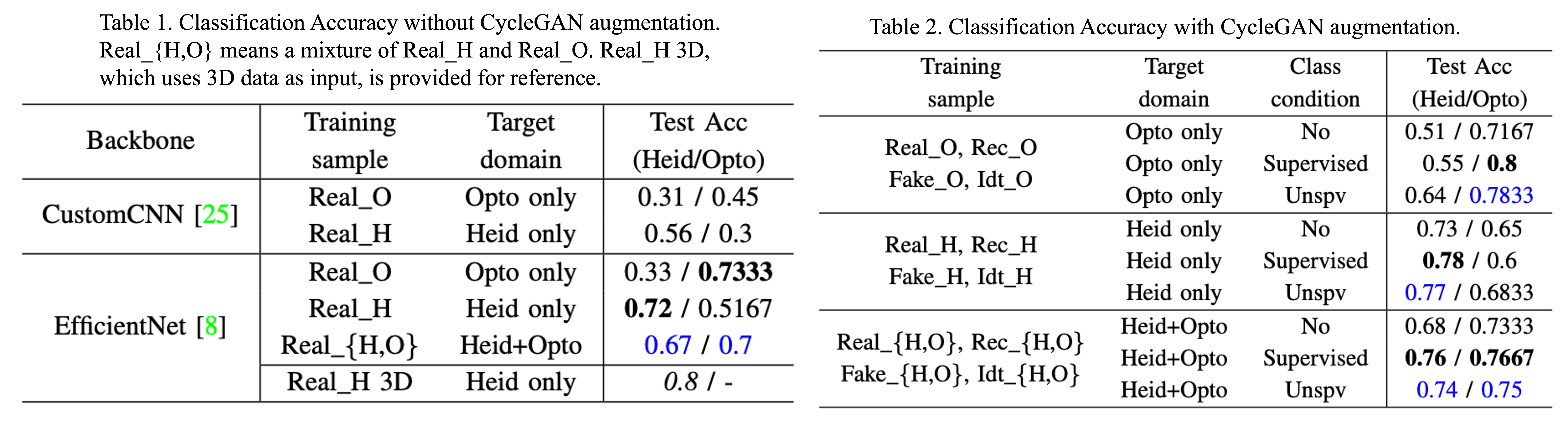

In this paper, we enable training of an age-related macular degeneration (AMD) stage classifier on cross-instrument optical coherence tomography angiography (OCTA) data from Heidelberg and Optovue devices. Because the Heidelberg and Optovue datasets contain retinas from different patients, paired images are unavailable. To address this limitation, we employ the CycleGAN framework to translate images between the two instruments; its cycle-consistency constraint compensates for the lack of paired training samples.

The vanilla CycleGAN training loss, without class constraints, is:

$l_{cyclegan}(x,y) = l_{cyc}(x,\tilde{x}) + l_{cyc}(y,\tilde{y}) + \alpha(l_{GAN}(x,\hat{x}) + l_{GAN}(y,\hat{y})) + \beta(l_{idt}(x,G_{H2O}(x)) + l_{idt}(y,G_{O2H}(y)))$

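where $G_{H2O}$ and $G_{O2H}$ are the Heidelberg-to-Optovue and Optovue-to-Heidelberg generators, $\hat{x}$ is $y$ translated into the domain of $x$ and $\hat{y}$ is $x$ translated into the domain of $y$, $\tilde{x}$ and $\tilde{y}$ are the cycle reconstructions of $x$ and $y$, and $\alpha, \beta$ are loss weights.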
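
As a reading aid for the formula above, the following plain-Python sketch evaluates $l_{cyclegan}$ on toy "images" given as lists of floats. This page does not specify the individual terms, so the sketch assumes L1 (mean absolute error) for $l_{cyc}$ and $l_{idt}$ and the minimax log-likelihood form $\log D(x) + \log(1 - D(\hat{x}))$ for $l_{GAN}$, as in the original CycleGAN formulation (whose reference implementation swaps in a least-squares variant in practice). It also assumes $x$ is an Optovue image and $y$ a Heidelberg image, which is how the identity terms read under the usual CycleGAN convention. All helper names (`l1`, `l_gan`, `cyclegan_loss`) are hypothetical; real training uses an autodiff framework and alternates generator and discriminator updates, so this only shows how the terms are assembled.

```python
import math
from typing import Callable, List, Sequence

Image = List[float]                       # hypothetical: a toy image flattened to pixel values
Generator = Callable[[Image], Image]      # stands in for G_H2O or G_O2H
Discriminator = Callable[[Image], float]  # returns P(real), strictly between 0 and 1


def l1(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute error, assumed here for both l_cyc and l_idt."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


def l_gan(d: Discriminator, real: Image, fake: Image) -> float:
    """Minimax adversarial term log D(real) + log(1 - D(fake)) (assumed form of l_GAN)."""
    return math.log(d(real)) + math.log(1.0 - d(fake))


def cyclegan_loss(
    x: Image,
    y: Image,
    g_h2o: Generator,
    g_o2h: Generator,
    d_x: Discriminator,
    d_y: Discriminator,
    alpha: float,
    beta: float,
) -> float:
    # Assumption: x is an Optovue image and y a Heidelberg image, so each identity
    # term feeds a generator an image that is already in its output domain.
    y_hat = g_o2h(x)        # x translated into the Heidelberg domain
    x_hat = g_h2o(y)        # y translated into the Optovue domain
    x_tilde = g_h2o(y_hat)  # cycle reconstruction of x
    y_tilde = g_o2h(x_hat)  # cycle reconstruction of y

    cyc = l1(x, x_tilde) + l1(y, y_tilde)
    gan = l_gan(d_x, x, x_hat) + l_gan(d_y, y, y_hat)
    idt = l1(x, g_h2o(x)) + l1(y, g_o2h(y))
    return cyc + alpha * gan + beta * idt


# Toy check: the two generators invert each other exactly, so the cycle terms vanish.
loss = cyclegan_loss(
    [1.0, 2.0], [4.0, 0.0],
    g_h2o=lambda im: [2 * p for p in im],
    g_o2h=lambda im: [p / 2 for p in im],
    d_x=lambda im: 0.5, d_y=lambda im: 0.5,
    alpha=1.0, beta=0.5,
)
print(round(loss, 4))  # -1.5226
```

With both discriminators outputting 0.5, each adversarial term equals $2\log 0.5$, and the identity terms contribute $1.5 + 1.0$ before weighting by $\beta$. Under the minimax reading, the generators minimize this value while the discriminators maximize the adversarial part.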

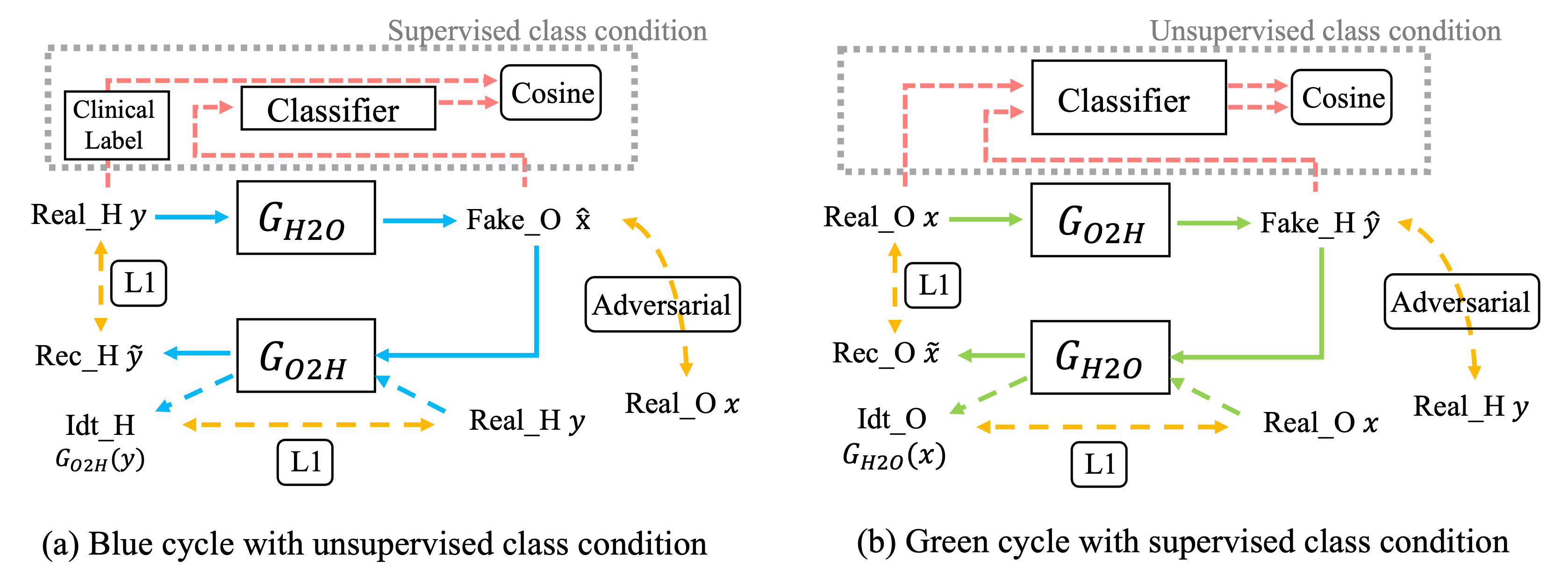

To better support classifier training, we further propose explicit class constraints, in both a supervised and an unsupervised variant, that encourage each translated image to be classified consistently with its source image.

Combined with the vanilla loss, the final CycleGAN training loss with supervised class constraints is:

$l_{total} = l_{cyclegan}(x,y) + \gamma( l_{cos}(label(x), cls(\hat{y})) + l_{cos}(label(y), cls(\hat{x})))$

Likewise, the final CycleGAN training loss with unsupervised class constraints is:

$l_{total} = l_{cyclegan}(x,y) + \gamma( l_{cos}(cls(x), cls(\hat{y})) + l_{cos}(cls(y), cls(\hat{x})))$

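where $\gamma$ is a loss weight, $l_{cos}$ is a cosine-similarity-based loss, $label(\cdot)$ is the ground-truth AMD stage label, and $cls(\cdot)$ is a pretrained feature extractor.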
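
The two class-constrained objectives differ only in the target that $cls(\hat{y})$ and $cls(\hat{x})$ are compared against: the source image's ground-truth label in the supervised case, and the pretrained network's own output on the source image in the unsupervised case. The sketch below assumes $l_{cos}$ is one minus cosine similarity, $label(\cdot)$ is a one-hot vector over AMD stages, and $cls(\cdot)$ returns a vector of the same length. The names `supervised_total`, `unsupervised_total`, and `toy_cls` are hypothetical, and `l_cyclegan` is passed in as a precomputed number.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Classifier = Callable[[Vector], Vector]  # hypothetical: image -> one score per AMD stage


def l_cos(a: Sequence[float], b: Sequence[float], eps: float = 1e-8) -> float:
    """Assumed form of l_cos: 1 - cosine similarity (0 when a and b point the same way)."""
    dot = sum(p * q for p, q in zip(a, b))
    norm = math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b))
    return 1.0 - dot / max(norm, eps)  # eps guards against an all-zero vector


def supervised_total(l_cyclegan: float, label_x: Vector, label_y: Vector,
                     x_hat: Vector, y_hat: Vector, cls: Classifier, gamma: float) -> float:
    # Each translation should keep the ground-truth label of the image it came from.
    return l_cyclegan + gamma * (l_cos(label_x, cls(y_hat)) + l_cos(label_y, cls(x_hat)))


def unsupervised_total(l_cyclegan: float, x: Vector, y: Vector,
                       x_hat: Vector, y_hat: Vector, cls: Classifier, gamma: float) -> float:
    # No labels: the pretrained network's output on the source image is the target.
    return l_cyclegan + gamma * (l_cos(cls(x), cls(y_hat)) + l_cos(cls(y), cls(x_hat)))


def toy_cls(img: Vector) -> Vector:
    # Hypothetical stand-in: a 2-pixel toy image is treated as its own score vector.
    return list(img)


print(supervised_total(2.25, [1.0, 0.0], [0.0, 1.0], x_hat=[0.0, 3.0], y_hat=[2.0, 0.0],
                       cls=toy_cls, gamma=0.1))  # 2.25
```

Here both translations point in the direction of their source labels, so the class term is zero and only $l_{cyclegan}$ remains; swapping the translated vectors would add $\gamma \cdot 2$. In actual training, gradients of the class term would flow through $cls(\cdot)$ into the generators; whether $cls(\cdot)$ itself is kept fixed is an assumption here.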

Experimental results show that the CycleGAN-based translator is particularly effective for AMD stage classifier training when it is trained with these class constraints.

Please also refer to our clinical paper, Heinke et al. (2024) in Scientific Reports, whose citation is included below.

Citation:

    @inproceedings{zhang2024octa,
      title={OCTA-based AMD Stage Grading Enhancement via Class-Conditioned Style Transfer},
      author={Zhang, Haochen and Heinke, Anna and Broniarek, Krzysztof and Galang, Carlo Miguel B and Deussen, Daniel N and Nagel, Ines D and Michalska-Ma{\l}ecka, Katarzyna and Bartsch, Dirk-Uwe G and Freeman, William R and Nguyen, Truong Q and others},
      booktitle={International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC)},
      pages={1--5},
      year={2024}
    }

    @article{heinke2024cross,
      title={Cross-instrument optical coherence tomography-angiography (OCTA)-based prediction of age-related macular degeneration (AMD) disease activity using artificial intelligence},
      author={Heinke, Anna and Zhang, Haochen and Broniarek, Krzysztof and Michalska-Ma{\l}ecka, Katarzyna and Elsner, Wyatt and Galang, Carlo Miguel B and Deussen, Daniel N and Warter, Alexandra and Kalaw, Fritz and Nagel, Ines and others},
      journal={Scientific Reports},
      volume={14},
      number={1},
      pages={27085},
      year={2024}
    }